Few technological questions carry greater ethical weight than the future of autonomous weapons.

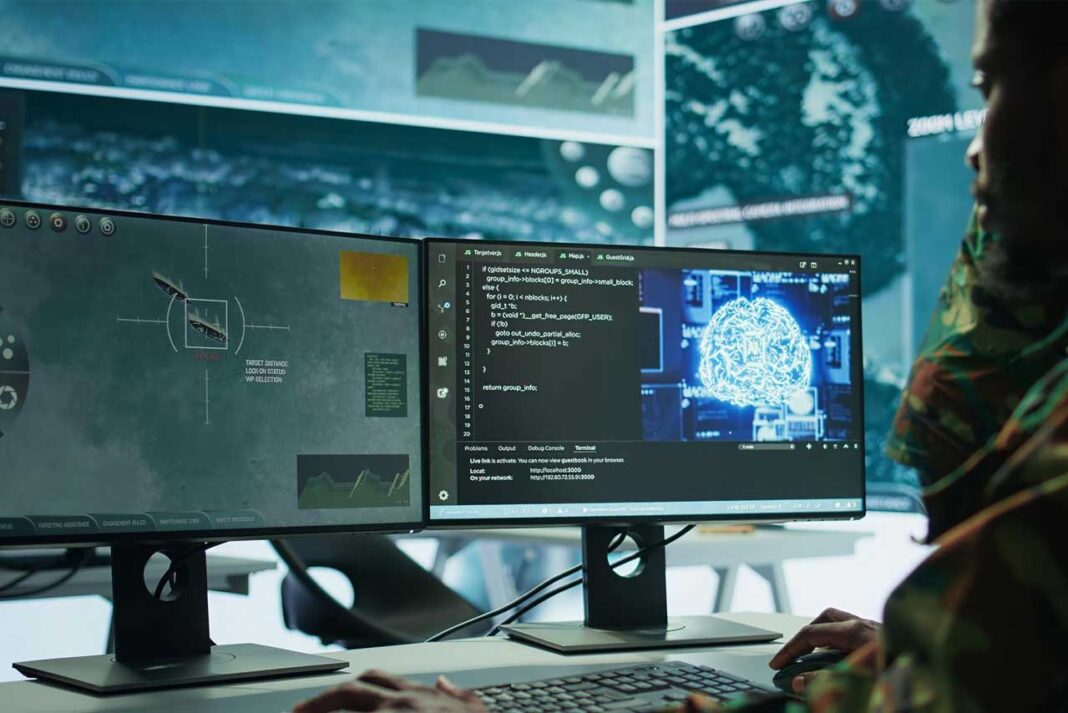

Across defense laboratories and military research facilities around the world, engineers are developing systems capable of identifying targets and launching attacks with minimal human intervention.

Supporters argue that autonomous weapons could make warfare more precise. Machines equipped with advanced sensors might identify threats more accurately than human soldiers operating under stress.

Proponents also point out that many military systems already contain elements of automation. Missile defense platforms, for example, must react within seconds to intercept incoming threats.

Yet critics warn that delegating life-and-death decisions to machines introduces profound moral risks. Human judgment, they argue, remains essential in determining when the use of force is justified. Algorithms trained on imperfect data could misidentify targets or fail to weigh complex ethical considerations, such as distinguishing combatants from civilians or judging whether an attack is proportionate.

The prospect of autonomous weapons also raises concerns about accountability.

If an AI-controlled weapon makes a mistake, who bears responsibility? The programmer who designed the algorithm? The military commander who deployed the system? The government that authorized its development?

International organizations and human rights groups have begun advocating for treaties banning fully autonomous weapons systems. Others argue that prohibitions would be difficult to enforce because the underlying technologies overlap with civilian AI research.

As artificial intelligence advances, the debate will only intensify. The question is not merely technological. It is philosophical. Human societies must decide whether machines should ever be entrusted with the authority to take a human life.